About Sustensis

Sustensis is a not-for-profit Think Tank providing inspirations, suggestions, and solutions for a transition to coexistence with Superintelligence. We must recognize that the scale of the required changes represents a Civilizational Shift in the history of the human species. We invite experts in various domains of knowledge to join us in making this website relevant to people interested in certain aspects of human progress in the context of evolving Artificial Intelligence, so that when it emerges as Superintelligence, it will become our friend rather than our foe.

The period of this civilisational transition will profoundly affect the way individuals live and relate to one another. The pace of change will be overwhelming, and without a framework for resilience, many people may struggle to adapt quickly enough. Therefore, we have created MetisAI Life Companion, an AI-supported Holistic Wellbeing service. It helps people withstand the pressure resulting from the turbulence of the transition period much better. With MetisAI Life Companion they can rediscover who they really are, based on their detailed Life Story and Character Profiling, enabling them to continuously align their fast-changing objectives and tasks with their Life Purpose.

Achieving a less turbulent transition to coexistence with Superintelligence will be much more difficult without a deep reform of democracy. Such a reform must be carried out swiftly and effectively and must be consensual. To facilitate that, we have developed an AI-enabled Consensual Debating approach, supported by the most advanced Artificial Intelligence Assistants, on our second subsidiary website – Consensus AI. It supports the governance side of a global transformation.

An important part of our work is Transhumanism. Transhumans are people who can make decisions and communicate with computers just by thought, using Brain-Computer Interfaces (BCI). They will soon become far more intelligent than any human. Superfast technological progress will ultimately enable Transhumans to become a new species – Posthumans. Thus, for us…

“Transhumanism is about Humanity’s transition to its coexistence with Superintelligence until humans evolve into a new species”.

There is also a comprehensive SEARCH facility to help you access the required information. You can access the content and leave comments without registering. However, to make standalone posts or articles, you need to register. The posts and entries are archived and continuously updated under the main tabs. This website observes the principles of the General Data Protection Regulation (GDPR), and by entering this site you agree to those principles, which you can view here.

_________________________________________________________________________________________________________________________________________________________________________________________________________________________

LATEST NEWS AND ARTICLES

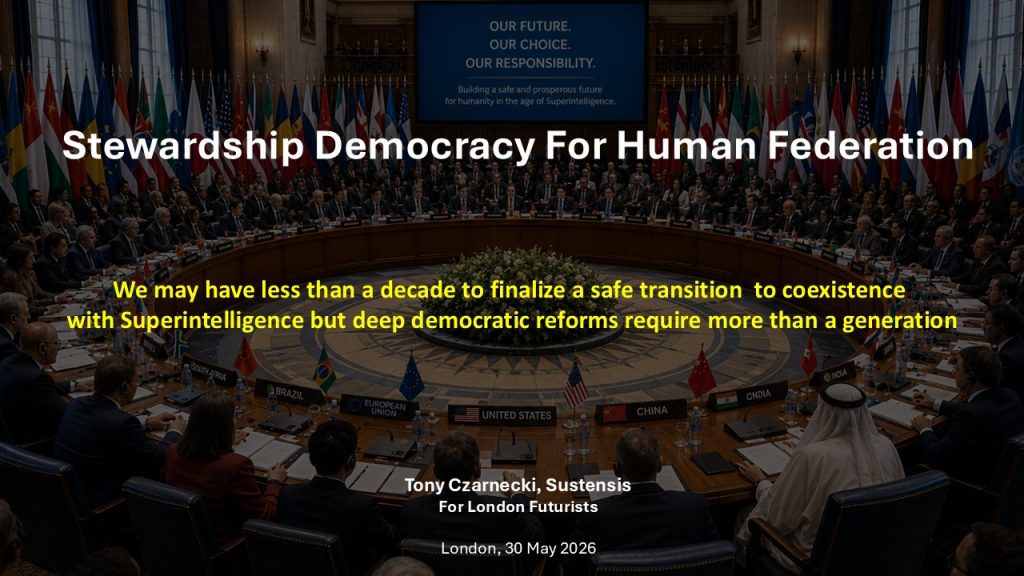

Stewardship Democracy for Human Federation

Enabling a safe civilizational transition to coexistence with Superintelligence

Presentation for London Futurists on Saturday, 30th May at 16:00 BST

Questions I will try to answer:

- What if individual powers align Superintelligence mainly with their own national interests?

- What if the United Nations cannot speak for humanity?

- What if democracy has one final task: to give humanity a legitimate voice before the handover to Superintelligence?

- What if party democracies are too polarised and short-term focused to select humanity’s Stewards (trustees)?

- What if the decisive act is not another election, but to define a Testament of Humanity?

My key argument is that ordinary democratic reforms are too slow for humans to control a safe civilizational transition to Superintelligence. The issue is not whether this or that form of democracy is better. The issue is whether humanity can create legitimate, competent and trusted institutions to manage this decisive shift in a democratic way.

Stewardship Democracy, which I propose, is not ordinary politics with better candidates. It is a democratic trusteeship for an extraordinary civilizational transition, designed to protect human freedom, dignity and future autonomy while humanity prepares for coexistence with Superintelligence.

Proposed key steps

- A political model called Stewardship Democracy.

- Democratically chosen Representatives called Stewards, selected for competence, integrity and public responsibility.

- Stewardship Assembly acting as a deliberative parliament.

- Human Federation formed first by a coalition of the willing countries.

- Stewardship Government empowered to manage the transition.

__________________________________________________________________________________________________________________________________________________________________________________________________________________________________________________________________________________

MetisAI Life Companion – Imperial Collaboration Initiative (Meet us at AI Collider, 15 April)

Sustensis has initiated discussions with Imperial College on the creation of a university-based start-up focused on the further development of the MetisAI Holistic Wellbeing methodology and the migration of the existing AI platform.

MetisAI Life Companion is a fully operational AI system supporting a Holistic Wellbeing Method developed at UCL, for those willing to continuously align their Life Purpose, Life Goals, Objectives, and daily actions into a coherent, evolving framework. The system integrates artificial intelligence with behavioural science to support decision-making, personal development, and long-term wellbeing.

The proposed initiative aims to establish an interdisciplinary collaboration involving:

- AI and computer science students and academics focusing on system development and deployment

- Academics across psychology, sociology, medicine, and economics exploring real-world impact

- Industry and public-sector stakeholders evaluating broader applications

Sustensis will contribute:

- The full MetisAI platform and system architecture

- The Holistic Wellbeing methodology

- Existing AI models and interaction frameworks

The objective is to migrate the existing platform and the methodology and to create a research-driven startup company within a university context, enabling rapid scaling up, distribution, evaluation and further development of AI-supported wellbeing.

The intention is to expand this Initiative into applications across:

- Higher education

- Healthcare and preventive wellbeing

- Public policy and social services

- Workforce and organisational development

We welcome interest from:

- Imperial students and researchers

- Academic collaborators

- Early-stage investors

- Institutional partners

Contact:

Tony Czarnecki

Managing Partner, Sustensis Limited

Email: tony.czarnecki@sustensis.co.uk

https://sustensis.co.uk

From BAD to Bright Future – Presentation for London Futurists

Here is a link to the presentation “From BAD to BRIGHT Future” made by Tony Czarnecki, Sustensis Managing Partner, for London Futurists on Wednesday 25 February 2025.

The Launch of our new service MetisAI Life Companion

1st August 2025

Tony Czarnecki, Sustensis

After several attempts over the last nine years, we have finally launched our new website, MetisAI Life Companion, supporting AI-driven Holistic Wellbeing. MetisAI helps you define your Life Purpose with life goals, objectives and tasks, aligned daily and matched with your character profile. Our courses teach you how to create this truly Holistic Wellbeing environment based on a new type of partnership – Human-AI Interaction (HAI). You will also learn how to build your comprehensive Life Story – a self-learning data store – through structured AI dialogues on your smartphone. If you are interested in co-creating and using your own Life Companion, visit our website to learn more about how to do that and why it took so long.

The article below is an introduction to AI-driven Holistic Wellbeing.

AI Companions and the Future of Holistic Wellbeing

I first began to think seriously about Holistic Wellbeing when mentoring postgraduate and doctoral students at University College London (UCL) and then at Regent’s University. I wanted to guide my mentees beyond career decisions to prepare for life as a whole. One of the reasons for this was that this perspective had enabled me to continuously align my objectives with my Life Purpose. So, my own experience was a template. But I also realized that the question “Who am I, and what is my Life Purpose?” was no longer a philosophical curiosity and was not only relevant for my mentees. In an era of ever-present AI and the coming of Superintelligence, the answer to this question will define how we thrive mentally, emotionally, and socially…

To continue reading, click on this image below.

Image: GPT 4.5

The Rebirth of Democracy

A new video from the presentation for the Tempus Club by Tony Czarnecki, Sustensis

London, 31 March, 2025

In this video, Tony presents the second part of his three-part series on “Geopolitics in the age of Artificial General Intelligence”. The first part covered ‘The Repercussions of the AI Safety Summit in Paris and NATO Munich Security Conference’ and its impact on controlling AGI as an existential threat to humanity. You can see the video from that presentation here.

_______________________________________________________________________________________________________________________________________________________________________________

What now after Munich and Paris Summits? – Geopolitics in the age of AGI

A new video from the presentation for the Tempus Club by Tony Czarnecki, Sustensis

London, 19 February, 2025

The consequences of the Paris AI Action Summit on 11th February and the Munich Security Conference on 15th February 2025 reverberate even more strongly after the most recent claim by President Trump that it was Ukraine that started the war. That was the background for the presentation I made for the Tempus Club on the impact of these conferences on our capability to control AI and, soon, AGI. You can see the video from that presentation here.

But our partner, David Wood, pointed us to an earlier article, ‘How AI Takeover Might Happen in 2 Years’. This is indeed a chilling story. Having read it, I find that what I had thought was a less optimistic scenario for the current decade is rather a super-optimistic one in the context of that article.

As the author says in the introduction, he hopes that stories of this kind can still, with a bit of foresight, become fictional ones. That is also the key purpose of my presentation.

Do Not Pause Advanced AI Development – CONSOLIDATE AI

An article by Tony Czarnecki, Sustensis

London, 5 November, 2024

In recent years, numerous publications have raised alarms about Artificial General Intelligence (AGI) or Superintelligence as an existential threat. Last year the Future of Life Institute published an open letter, “Pause Giant AI Experiments”, calling for suspending the development of the most advanced AI models for six months. PauseAI continues that appeal worldwide. In October 2024, two publications, ‘Narrow Path’ by Control AI and the Compendium on ‘The State of AI Today’ by Conjecture, called for such a moratorium to last decades. They also propose to reduce the AI threat by measures that are feasible right now, e.g. by curbing the supply of advanced chips, energy or AI algorithms. Additionally, both documents provide evidence that the existential threat coming from AI is real and near, underlining a growing divide between AI’s exceptional pace of development and humanity’s readiness to manage it.

However, unlike the above measures, all other proposals, like a moratorium on the development of the most advanced AI models, depend on a literally global implementation. That seems unrealistic as it ignores political realities. If such an implementation were possible, the UN would have done it. But since the UN is dysfunctional, we could only consider a partial global implementation, which would defeat the belief that pausing AI would minimize the risks. On the contrary, it would increase them. The assumptions taken in this document differ, based on the following reasoning:

- AI cannot be un-invented. The ultra-fast increase of AI’s intelligence and capabilities, which will in the next few years exceed general human intelligence, is unstoppable.

- AI is more than just a technology – it represents a new kind of intelligence that could lead to:

  - Human extinction. If Superintelligence evolves as malicious, humanity may face extinction similar to 99% of other species and several hominids… or

  - Human coexistence with Superintelligence. Effective control of AI development could deliver a benevolent Superintelligence, bringing unprecedented prosperity for humanity.

- Only partial global AI control is possible, which must be completed by about 2030. Therefore, immediate control must start in the regions capable of effective implementation.

- Human control over AI may eventually cease, particularly if it surpasses human intelligence (AGI) or becomes smarter than humans.

- AGI may emerge by 2030 and the length of human control will depend on:

  - The degree of AGI alignment with human values, preferences and goals.

  - The degree of AGI proliferation, which could complicate overall human control over AI. We may only be able to control or coexist with just one AGI, not dozens.

- Halting advanced AI development could heighten the risk of malicious AGI, either by error or by intention, due to potential AGI proliferation.

- A malicious AGI’s goals may lead to a conflict between the species. We may face a confrontation similar to an existential war.

- CONSOLIDATING AI development in one global centre will create one Superintelligence, minimizing the risk of ‘the war of AGIs’.

Based on these assumptions, to implement a safer AI it is proposed to:

- Create a de facto World Government, with the initial purpose of delivering Safe AI. Like NATO, it could be created in one year, with over 50 countries from the G7, the European Political Community, the OECD and ideally India and China.

- Expand the prerogatives of the Global Partnership on AI (GPAI) to implement ‘Safe AI’.

- CONSOLIDATE the development of the most advanced AI models in one global centre, applying a NON-PROLIFERATION policy, by creating a Global AI Company (GAICOM).

- Implement a Multimodal AI Maturing Framework to ensure that Superintelligence aligns with human values, preferences and goals.

To see how it can be done, read the article by clicking on the image below.

Tony Czarnecki, Sustensis

______________________________________________________________________________________________________________________________________________________________________________

Two AI-generated journalists discuss in their own podcast Tony Czarnecki’s article ‘Taking Control over AI before it starts controlling us’

London, 21 September, 2024

You may have come across some extraordinary examples of what AI can already do or how it can help us. Recently, I came across a truly astounding innovation, hardly mentioned by its developers, Google DeepMind, and still called ‘Experimental’ – NotebookLM. I decided to try it myself. As the basis for this experiment, I used my article ‘Taking control over AI before it starts controlling us’, published last year, which you can also view on our website (click on the image below):

Image generated by: DALL-E

In this experiment, NotebookLM read the article and created a podcast – an interview between two journalists – an ‘AI woman’ and an ‘AI man’. What is most striking is the superbly natural flow of this conversation, with humour, hesitation, as well as other emotional expressions. Just be amazed. This is how these two ‘journalists’ summarized their podcast based on a 12-page article:

“The provided text is an excerpt from a document entitled “Taking control over AI before it starts controlling us”. This article discusses the potential dangers of Artificial Intelligence (AI) reaching a point where it surpasses human intelligence and control. The author, Tony Czarnecki, argues that the development of a Superintelligence, an AI entity with its own goals and surpassing human capabilities, poses an existential threat to humanity. He highlights the need for global action and governance to control AI development before it reaches a point of singularity, where it becomes uncontrollable. Czarnecki proposes a framework involving an international agency to monitor AI progress and implement a system of control based on universal values. He also explores the role of Transhumans, individuals with enhanced cognitive abilities through Brain-Computer-Interfaces, in potentially controlling Superintelligence from within. The article outlines various scenarios, including the potential for an AI-governed world, and concludes with a call for urgent global action to mitigate the potential risks of AI.”

Listen to the 8-minute podcast by clicking on the image below.

Image generated by: DALL-E

Here is another of the articles, “Can AI make you happy?”, which Google’s NotebookLM turned into an interview.

In this incredibly human-like interview, it is the emotions that make it so remarkable. Additionally, the AI interviewers very skillfully communicate some complex problems in simpler language, creating the feel of a chat over a coffee. Click on the image to listen to this 13-minute podcast.

London, 25/9/2024

Image generated by: DALL-E

________________________________________________________________________________________________________________________________________________________________________________________________________
